Bug #15552

Updated by Eregon (Benoit Daloze) about 7 years ago

Specifically, the image generated is incorrect.

I modified the footer of the benchmark like this, so that the rendered image is written to `ao.ppm`:

```ruby
alias printf_orig printf

def printf(*args)
  $fp.printf(*args)
end

File.open("ao.ppm", "w") do |fp|
  $fp = fp
  printf("P6\n")
  printf("%d %d\n", IMAGE_WIDTH, IMAGE_HEIGHT)
  printf("255\n")
  Scene.new.render(IMAGE_WIDTH, IMAGE_HEIGHT, NSUBSAMPLES)
end

undef printf
alias printf printf_orig
```

Here is the expected image:

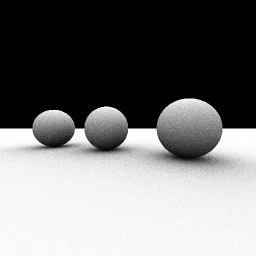

I get this image with MRI 2.6.0:

And interestingly, TruffleRuby 1.0.0-rc11 renders an image closer to the expected one:

I guess this might be an interpreter bug, and possibly also a bug in the benchmark source code.

I think every benchmark should have some validation, otherwise it's prone to measure something unexpected like this.
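As a rough sketch of what such validation could look like: capture what the benchmark writes, hash it, and compare against a known-good digest. The helper names `output_digest` and `validate_output!` and the tiny stand-in renderer are hypothetical and not part of the benchmark, and this assumes the benchmark output is deterministic (no unseeded randomness, and floating-point results that agree across implementations).

```ruby
require "digest"
require "stringio"

# Hypothetical helper: yields a binary in-memory IO to the block and
# returns the SHA-256 hex digest of everything written to it.
def output_digest
  sink = StringIO.new(String.new) # String.new is binary (ASCII-8BIT)
  yield sink
  Digest::SHA256.hexdigest(sink.string)
end

# Hypothetical helper: raises if the output differs from the expected digest.
def validate_output!(expected_digest, &render)
  actual = output_digest(&render)
  unless actual == expected_digest
    raise "benchmark output mismatch: expected #{expected_digest}, got #{actual}"
  end
  true
end

# Stand-in for the real renderer: writes a tiny 2x1 PPM image.
tiny_render = lambda do |io|
  io.printf("P6\n")
  io.printf("%d %d\n", 2, 1)
  io.printf("255\n")
  io.write([255, 0, 0, 0, 0, 255].pack("C*"))
end

# In practice the reference digest would be recorded once from a
# known-good run and stored alongside the benchmark.
reference = output_digest(&tiny_render)
validate_output!(reference, &tiny_render)
```

The check would run outside the timed region, so it should not affect the measured result.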